We use a fast imaging method called holographic microscopy to watch self-assembling systems. In a holographic microscope, the sample is illuminated by laser light, and the resulting image (or hologram) can be used to determine the 3D structure, position, and orientation of a microscopic sample. We build new holographic microscopes and develop software to analyze the holograms.

An introduction to holography

Dennis Gabor developed holography in the 1940s as a way to improve the resolving power of electron microscopes. However, his ideas apply to imaging with any coherent source. With the development of lasers in the 1960s, it became possible to do holographic recording and reconstruction with visible light and photographic film. Later, when CCD and CMOS cameras arrived on the scene, holograms could be recorded digitally. Today, with high-speed computers, we can both record and reconstruct holograms digitally.

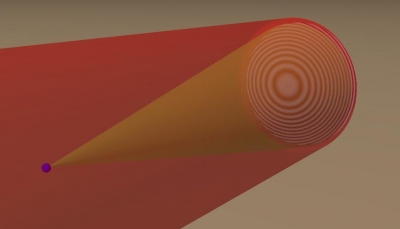

To understand holography, we need to consider the wave nature of light. At any point in space, a light wave can be described by an amplitude and a phase. Whereas a conventional photograph captures only the intensity of light reflected or scattered from an object, a hologram can capture both amplitude and phase. This is because the hologram is recorded using two light beams, one of which (called the object beam) is scattered from the object, and the other (called the reference beam) is aimed at the camera. If the two waves are coherent (meaning the phase is well-defined at each point in space), they can interfere, or beat, with each other to produce interference fringes, like this:

In the picture above, the small blue particle is illuminated by the red beam. It scatters light into an object beam, shown as a cone radiating from the sphere. That object beam interferes with the red beam to produce a fringe pattern, shown on the right side of the image.
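The fringe pattern can be written down with the standard two-beam interference formula. The symbols here are our own notation, introduced only for illustration: $R = A_R e^{i\phi_R}$ is the reference field and $O = A_O e^{i\phi_O}$ is the object field at a given camera pixel, and $I$ is the recorded intensity.

```latex
\begin{align}
I &= |R + O|^2 = |R|^2 + |O|^2 + R^{*}O + R\,O^{*} \\
  &= A_R^2 + A_O^2 + 2 A_R A_O \cos(\phi_O - \phi_R)
\end{align}
```

The cosine term is what produces the fringes: the intensity is brighter where the two beams are in phase and darker where they are out of phase, so the phase of the object beam is recorded even though the camera measures only intensity.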

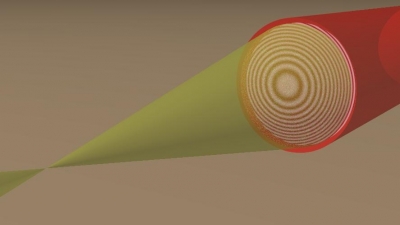

The fringes encode information about the phase of light and, implicitly, about the position and structure of the object. That information can be recovered through reconstruction. The simplest way to recover the 3D information is to record the hologram as an intensity pattern on a camera. If, say, we take the image and print it on photographic film, we can then shine light back through it. The hologram will diffract the light so that an image of the object will become visible:

This can most easily be understood if one imagines recording the hologram of a spherically scattered wave (like the light scattered from a microscopic particle). If one interferes that spherical wave coming from the object with a plane wave, a pattern of concentric rings will be observed. These fringes will resemble a Fresnel zone plate. And, just like a Fresnel zone plate, the fringes will focus a plane wave illuminating them to a point.
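The ring pattern described above can be sketched numerically. The example below is a minimal illustration using NumPy only, not the lab's analysis code: it adds a weak spherical wave from an ideal point scatterer to a unit-amplitude plane wave and records the intensity. The wavelength, pixel size, distance, and scattered amplitude are illustrative assumptions, and the function names are hypothetical.

```python
import numpy as np

def point_scatterer_hologram(n=256, pixel=0.1e-6, wavelength=0.66e-6,
                             z=10e-6, amplitude=0.1):
    """Intensity of a unit plane wave plus a weak spherical wave.

    The point scatterer sits a distance z in front of the center of the
    camera plane. All parameter values are illustrative assumptions.
    """
    k = 2 * np.pi / wavelength
    coords = (np.arange(n) - n // 2) * pixel       # pixel n//2 is at x = 0
    x, y = np.meshgrid(coords, coords)
    r = np.sqrt(x**2 + y**2 + z**2)                # distance from the scatterer
    reference = np.exp(1j * k * z)                 # plane wave at the camera
    scattered = amplitude * (z / r) * np.exp(1j * k * r)
    return np.abs(reference + scattered) ** 2

def bright_ring_radius(m, wavelength=0.66e-6, z=10e-6):
    """Radius of the m-th bright ring, where the path difference r - z = m * wavelength."""
    return np.sqrt(2 * m * wavelength * z + (m * wavelength) ** 2)

hologram = point_scatterer_hologram()
zone_plate_radius = np.sqrt(2 * 0.66e-6 * 10e-6)  # paraxial zone-plate scaling for m = 1
print(bright_ring_radius(1) / zone_plate_radius)
```

For small rings the bright-ring radii grow approximately as the square root of the ring index, which is the same scaling as the zones of a Fresnel zone plate. This is why the recorded fringes can act as a lens on reconstruction.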

In our lab, we build holographic microscopes, and we develop techniques for doing 3D reconstruction on a computer. Below are some of the techniques we use.

In-line holographic microscopy

The in-line holographic microscope operates as described above. A laser illuminates the sample, which is typically a colloidal or biological sample suspended in a liquid. Some of that light passes through the sample and acts as the reference beam, and some light is scattered by the object. We record the hologram on a CMOS camera, and we reconstruct it on a computer to recover 3D information about the sample. We can do the numerical equivalent of shining the reference beam back on the hologram and looking at the diffracted image, or, if we know what our object is beforehand, we can numerically fit a scattering model to the observed hologram using our software package, HoloPy.
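As a rough illustration of the first approach, numerically "shining the reference beam back" on the hologram, the sketch below back-propagates a synthetic in-line hologram with the angular spectrum method. It uses NumPy only and does not show HoloPy's API or implementation. The function names, the ideal point-scatterer model, and all parameter values are assumptions made for this example.

```python
import numpy as np

def propagate(field, distance, wavelength, pixel):
    """Propagate a sampled 2D field by `distance` with the angular spectrum method."""
    n_y, n_x = field.shape
    fx = np.fft.fftfreq(n_x, d=pixel)              # spatial frequencies (cycles/m)
    fy = np.fft.fftfreq(n_y, d=pixel)
    fxx, fyy = np.meshgrid(fx, fy)
    arg = 1.0 / wavelength**2 - fxx**2 - fyy**2    # positive for propagating waves
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    # Evanescent components (arg <= 0) are discarded.
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def synthetic_hologram(n, pixel, wavelength, z, amplitude=0.1):
    """In-line hologram of an ideal point scatterer a distance z from the camera."""
    k = 2 * np.pi / wavelength
    coords = (np.arange(n) - n // 2) * pixel
    x, y = np.meshgrid(coords, coords)
    r = np.sqrt(x**2 + y**2 + z**2)
    total = np.exp(1j * k * z) + amplitude * (z / r) * np.exp(1j * k * r)
    return np.abs(total) ** 2

# Illustrative values (assumptions): 0.66 um light, 0.1 um pixels, scatterer 10 um away.
n, pixel, wavelength, z = 256, 0.1e-6, 0.66e-6, 10e-6
hologram = synthetic_hologram(n, pixel, wavelength, z)

# Subtract the uniform reference intensity, then step back toward the sample.
in_focus = np.abs(propagate(hologram - 1.0, -z, wavelength, pixel))
out_of_focus = np.abs(propagate(hologram - 1.0, -z / 2, wavelength, pixel))

peak = np.unravel_index(np.argmax(in_focus), in_focus.shape)
print("brightest pixel at the scatterer plane:", peak)
print("peak amplitude, in focus vs. halfway:", in_focus.max(), out_of_focus.max())
```

Scanning the propagation distance and looking for the sharpest focus gives an estimate of the scatterer's position along the optical axis. Fitting a scattering model, the second approach mentioned above, instead compares a computed hologram directly with the recorded one.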

We are currently able to track micron-scale spheres to a precision of less than 1 nm in all three dimensions, at high frame rates (over 5000 frames/second). We are also able to track clusters of spheres, as well as non-spherical particles and their rotations, in three dimensions to a spatial precision of about 10 nm. Below is a video showing (on the left) a series of holograms of a 0.37 × 1.05 µm polystyrene ellipsoid diffusing in water and (on the right) a rendering of the particle, showing the 3D position and orientation we infer from fitting a model to the recorded holograms. The movie is sped up by a factor of 5 from real time.